Have you seen any of those viral videos in which political leaders appear to say absurd things? Within seconds you can tell that they are a hoax, thanks to the poor lip-syncing. Now imagine the same videos made with far better accuracy, with the speakers saying something genuinely controversial instead of something light-hearted. How would the public tell what is real from what is fake, and, more importantly, how would this affect the public's reaction?

We are already aware of the damaging consequences of fake news and how it can permanently harm people's reputations when it reaches impulsive readers. With fake, or rather Deepfake, videos the damage could be even more pervasive, since even the most educated people could easily be fooled by them.

The term "Deepfake" comes from the technology behind this disinformation weapon: deep learning algorithms. Fake videos used to be easy to recognise because the audio was out of sync with the speaker's mouth movements. Now that deep learning produces meticulous output and removes the enormous effort and errors of manual video editing, such videos have far greater potential to cause chaos at critical times such as elections.

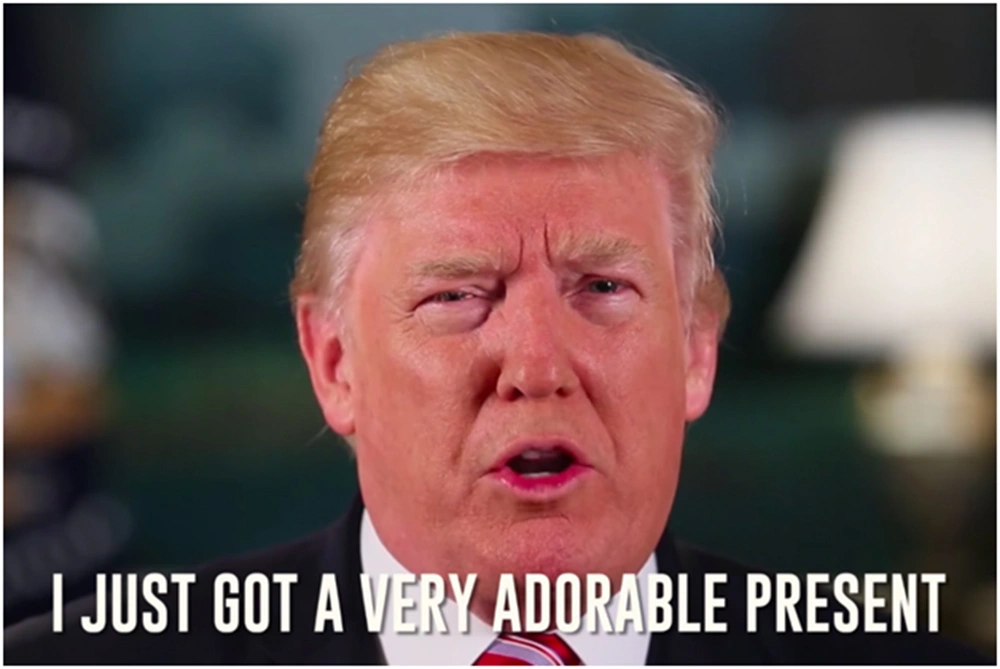

One widely used method relies on a recurrent neural network that automatically converts an audio track into near-perfect mouth movements. A realistic mouth texture is then synthesised for those movements and composited into retimed frames of the target video. BuzzFeed published a short preview of how this technology could work a few months ago.

https://www.youtube.com/watch?v=cQ54GDm1eL0
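To make the first stage of that pipeline more concrete, here is a minimal, untrained sketch of a recurrent network that reads audio frames one at a time and emits a set of mouth-shape parameters for each frame. It is not the method behind any particular Deepfake tool. The 13 features per frame (loosely modelled on common speech features), the four output mouth parameters, and the names `make_rnn` and `audio_to_mouth` are all hypothetical, and the weights are random rather than learned. What it illustrates is the recurrence itself: the hidden state carried from frame to frame lets each predicted mouth shape depend on the audio that came before it, not just on the current instant.

```python
import math
import random


def make_rnn(input_size, hidden_size, output_size, seed=0):
    """Create randomly initialised weights (hypothetical and untrained, for illustration only)."""
    rng = random.Random(seed)

    def matrix(rows, cols):
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

    return {
        "W_xh": matrix(hidden_size, input_size),   # audio features -> hidden state
        "W_hh": matrix(hidden_size, hidden_size),  # previous hidden -> hidden (the recurrence)
        "W_hy": matrix(output_size, hidden_size),  # hidden state -> mouth-shape parameters
        "b_h": [0.0] * hidden_size,
        "b_y": [0.0] * output_size,
    }


def matvec(m, v):
    """Multiply matrix m (a list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def audio_to_mouth(params, audio_frames):
    """Map a sequence of audio feature vectors to one mouth-shape vector per frame."""
    hidden = [0.0] * len(params["b_h"])
    mouth_shapes = []
    for frame in audio_frames:
        # The new hidden state mixes the current audio frame with the previous hidden state.
        pre_activation = [
            xh + hh + b
            for xh, hh, b in zip(
                matvec(params["W_xh"], frame),
                matvec(params["W_hh"], hidden),
                params["b_h"],
            )
        ]
        hidden = [math.tanh(z) for z in pre_activation]
        # Linear read-out of this frame's mouth-shape parameters.
        mouth_shapes.append(
            [y + b for y, b in zip(matvec(params["W_hy"], hidden), params["b_y"])]
        )
    return mouth_shapes


if __name__ == "__main__":
    rnn = make_rnn(input_size=13, hidden_size=8, output_size=4)
    # Five hypothetical audio frames of 13 features each.
    frames = [[0.1 * (i + j) for j in range(13)] for i in range(5)]
    shapes = audio_to_mouth(rnn, frames)
    print(len(shapes), len(shapes[0]))  # 5 4
```

A real system would have to learn these weights from footage of the target speaker, then pass the predicted mouth shapes on to the texture-synthesis and compositing stages described above; both of those stages are omitted here.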

As the algorithms improve rapidly, their output has started to blur the line between what is real and what is fake. Here is another video that shows the technology at work.

https://www.youtube.com/watch?v=9Yq67CjDqvw

Unsurprisingly, the technology has raised serious concerns around the globe. Public figures, including former US ambassadors to Russia John Beyrle and Michael McFaul, have said that they have already been targeted by such deceptive videos.

Andrew Grotto, an international security fellow at the Center for International Security and Cooperation at Stanford University in California, has said, "Within a year or two, it's going to be really hard for a person to distinguish between a real video and a fake video." He also warned that the technology "will be irresistible for nation states to use [it] in disinformation campaigns to manipulate public opinion, deceive populations and undermine confidence in our institutions."

To deal with such videos, the US Defense Advanced Research Projects Agency (DARPA) has started a four-year program to develop technologies for detecting fake videos. The program is already two years in, and it is hard to say whether detection will be able to keep pace with the development of Deepfake technology.
