PUBLIC CONCERN OVER AI COULD LEAD TO A CONCERTED BACKLASH: RSA REPORT

Google employees aren’t the only people concerned about the use of AI in crucial areas like the military. The Royal Society for the encouragement of Arts, Manufactures and Commerce (RSA) has recently released a report titled ‘Artificial Intelligence: Real Public Engagement’ that raises the prospect of a concerted backlash from the public at large.

The report is based on a YouGov poll of 2,000 UK citizens, recently commissioned by the RSA. The participants were asked how aware they were of automated systems being used in a variety of areas, and whether and why they considered such use ethical.

The findings were surprising: as few as 9% of the participants said they knew of AI’s involvement in the criminal justice system. Furthermore, 77% and 78% of the people reported that they were unaware of AI-based automated decision-making systems in the workplace and immigration sectors respectively.

Notably, the only area where participants’ familiarity with automated decision systems outweighs their unawareness is also the most regulated one: advertising, social media, and search engine results. Here only 44% of the people claimed to be unaware, whereas in all the other sectors, including healthcare, social support, and financial services, the majority were unaware.

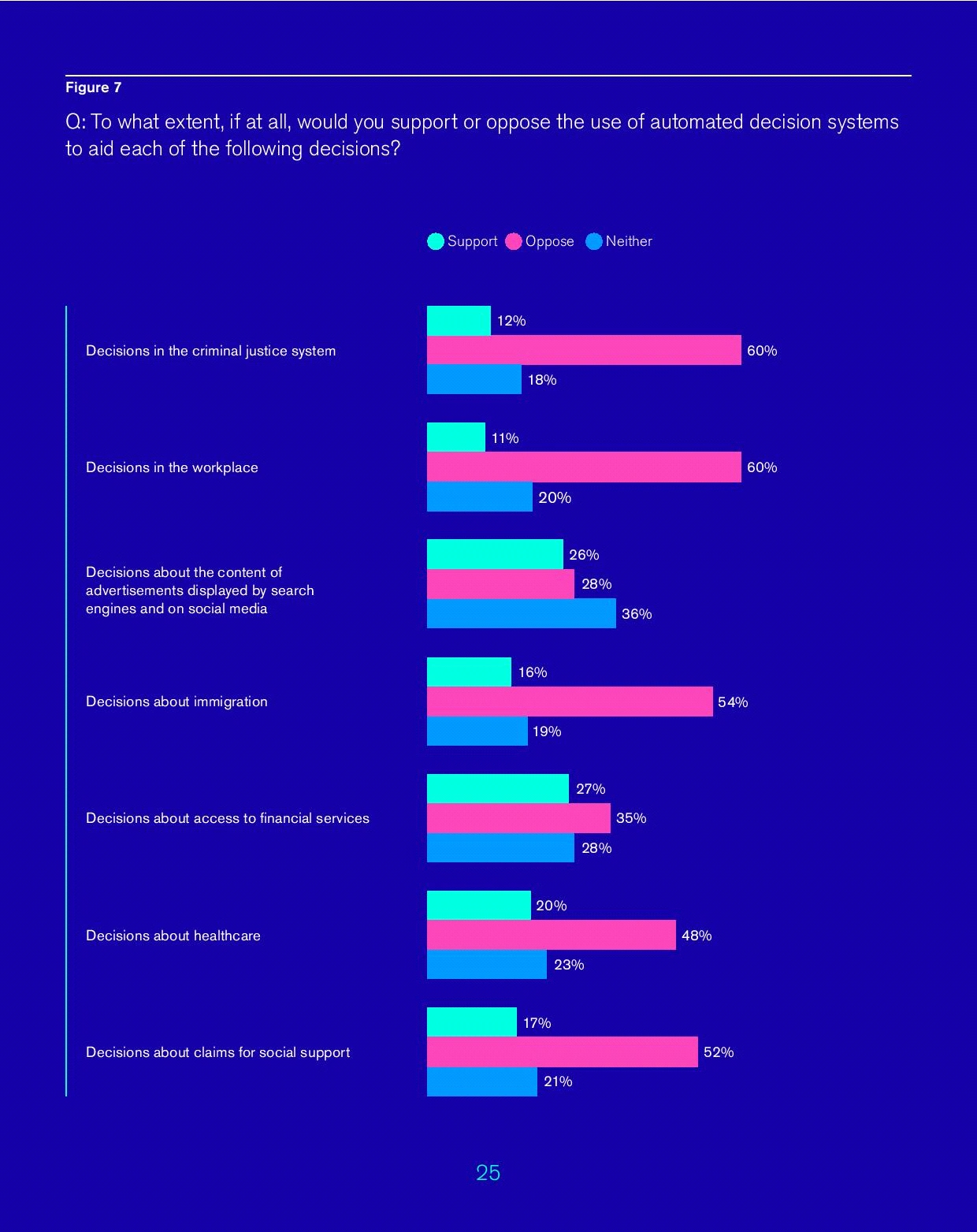

On average, 47% of people oppose the use of AI in crucial decision-making systems

After gauging their knowledge of the subject, the poll asked participants whether they accepted the use of AI in the aforementioned critical areas. Here is the breakdown of how many people expressed support and how many expressed opposition.

Source: RSA report Artificial Intelligence: Real Public Engagement

It was clear that most of the participants oppose AI-based systems in areas like healthcare, immigration, the workplace, and the justice system. The reasons they cited ranged from accountability issues to a lack of emotion. To be precise, nearly 60% of people cited a lack of emotional intelligence as the chief reason they don’t support AI’s use in critical areas like immigration, while 31% pointed to a lack of accountability in making ‘morally challenging decisions’.

How does the public think these things can be improved?

Before the actual poll, nearly 84% of the participants indicated their familiarity with and support for the use of AI in self-driving cars and in digital assistants like Alexa and Siri. That means the majority of people are not against AI technology per se, but against its use in areas that demand responsibility, clarity, and perhaps a certain emotional leniency.

A classic example, often quoted by experts, of how a lack of emotional intelligence can turn AI from an assistive technology into a bane is this: you have to take your child to a doctor urgently and your AI-based loaned car refuses to unlock, because its algorithm detects that you are one day late in paying the premium! So even if the inventors manage to implement bug-free algorithms with perfect transparency, the use of AI in all areas would always remain a nagging discomfort for humans.

Nevertheless, 36% of the participants said that they would be comfortable with automated decisions ‘if there was a right to request an explanation of the organisational processes undertaken to reach a decision with an AI system’, while 33% agreed that they would accept such decisions if companies were regulated and fined for failing to audit their automated systems. On average, nearly 21% of people said outright that they would accept the participation of AI systems provided there were common guiding principles for AI, for example that governments and companies include greater public participation and settle accountability through private frameworks for monitoring automated systems.

Still, only 3% of people said they would be completely comfortable with AI-based systems, even if all the aforementioned and other measures were taken over time to increase the accuracy of the decisions.

Given these statistics, it is clear that as awareness of AI’s use in critical sectors rises, there could be a public uproar against it. In this regard, Matthew Taylor, the Chief Executive at RSA, has written, “Currently it can feel that the growing ubiquity and sophistication of AI is closely matched by growing public concern about its implications.” He also stated that “unless the public feels informed and respected in shaping our technological future, the sense will grow that ordinary people have no agency – a sense that is a major driver in the appeal of populism. At worst it could lead to a concerted backlash against those perceived to be exploiting technological change for their own narrow benefit.”
